'Disappointed' Meta pauses AI training in EU & UK | Study reveals consumer fears over AI in news

#8 | Plus: Perplexity 'scrapes websites without consent': WIRED | Ex-OpenAI chief launches push for 'safe superintelligence' | Gen AI fever hits Cannes | AI Lego art

Welcome to this week’s newsletter charting developments in generative AI and the impacts these are having on human-made media.

🏛️ REGULATION & LEGISLATION

META AGREED to pause training its large language models (LLMs) on Facebook and Instagram posts in the EU and UK after complaints filed by privacy group NOYB (“none of your business”) in 11 European countries were backed by the Irish Data Protection Commission (DPC) on behalf of fellow European data protection authorities. Meta expressed its disappointment that the Irish DPC and UK Information Commissioner’s Office (ICO) had requested a delay, saying it represented a “step backwards for European innovation”. NOYB claimed the Irish DPC had U-turned, having initially approved the introduction of Meta AI. NOYB chair Max Schrems said: “So far, there has been no official change to the Meta privacy policy that would make this commitment legally binding.” Meanwhile, Helle Thorning-Schmidt, co-chair of Meta’s Oversight Board, told the Financial Times the independent self-regulatory body was urging the social media giant to label AI content rather than automatically remove it since “not all AI-generated content is harmful”. 🔗 Meta statement; NOYB statement; DPC statement; ICO statement; Financial Times

🌟 KEY TAKEAWAY: Last month Meta began informing users it was updating its privacy policy to allow AI training on their public texts and photos. Users had until June 26 to opt out. However, NOYB used requirements within Europe’s stringent GDPR rules to argue that Meta should instead have asked users to opt in. “Meta has every opportunity to deploy AI based on valid consent — it just chooses not to do so,” said Schrems, clearly enjoying his David v Goliath moment. Meta has been humiliated, and now faces an uphill battle with emboldened regulators.

ALSO:

➡ The EU has opened its AI Office; The Drum explains what it all means.

🚨 NEWS MEDIA

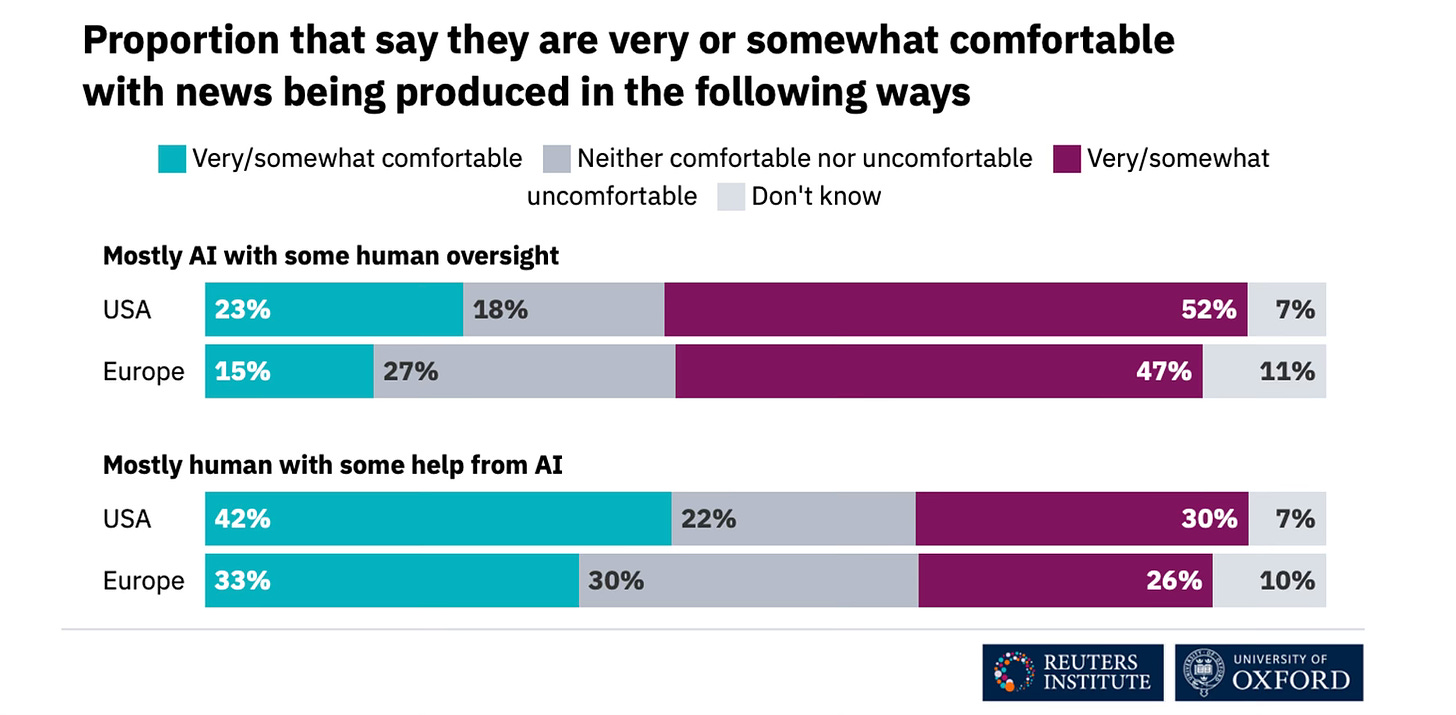

PEOPLE ARE MORE comfortable with generative AI being used by journalists ‘behind the scenes’, making their work more efficient, but are less comfortable with AI being used to deliver the news and least comfortable when it’s used to generate news content. Those were the findings of the Reuters Institute for the Study of Journalism’s latest Digital News Report, the first to probe consumer attitudes towards the use of AI in news. According to its survey in 28 countries and qualitative research in the UK, US and Mexico, just 19% of consumers are comfortable using news made mostly by AI with human oversight, while 36% are comfortable using news made by humans with the help of AI. In both cases, American audiences were more relaxed, European audiences less so.

Meanwhile, the BBC released qualitative research conducted in the UK, US and Australia indicating that audiences consider the use of AI in journalism to be “very high risk” due to “concerns about its potential to spread misinformation, deepen societal division, and replace human interpretation and insight”. 🔗 Digital News Report exec summary; Full report; BBC release; Full report

🌟 KEY TAKEAWAY: Nic Newman, lead author of RISJ’s annual report — a ‘must read’ for anyone concerned with the future of news — believes this is a “time of maximum risk for news organisations”. Several have been experimenting with generative AI to reduce costs and increase content personalisation. But as Newman rightly says, “they need to do this without reducing audience trust, which many believe will become an increasingly critical asset in a world of abundant synthetic media”.

ALSO:

➡ Gen AI can help local news survive ‘if implemented in the right way’.

➡ AI “frees journalists to perform high-value work”: Times ed ops chief.

➡ Journalists want transparency on Axel Springer’s deal with OpenAI.

💰 COPYRIGHT & LICENSING

WIRED UNVEILED the findings of an investigation into Perplexity which it said showed the AI-powered search engine is “surreptitiously” scraping websites without their permission. WIRED further claimed Perplexity’s chatbot was prone to making things up, or ‘bullshitting’, to use a technical term deployed by academics, hence WIRED’s provocative headline. When WIRED reporters provided Perplexity with headlines of WIRED articles, the chatbot closely paraphrased the accompanying stories while “at times summarising stories inaccurately and with minimal attribution”. Perplexity CEO Aravind Srinivas said WIRED’s analysis reflected “a deep and fundamental misunderstanding of how Perplexity and the internet work”. WIRED’s investigation follows last week’s claim by Forbes that Perplexity had ripped off its content. Axios this week reported that Forbes had sent a legal letter to Perplexity stating its copyright had been infringed and demanding Perplexity remove source articles. 🔗 WIRED investigation; Axios

🌟 KEY TAKEAWAY: As the WIRED reporters point out, Srinivas didn’t dispute the investigation’s findings and didn’t respond to their follow-up questions. Forbes gave his company 10 days to reply to its legal letter, which further demands it remove source articles, orders it to hand over any advertising revenues generated by the infringement, and seeks confirmation that it won’t breach Forbes’ copyright in future. As we said last week, news orgs need to call out bad behaviour on the part of AI companies and expose wrongdoing. It’s journalism’s superpower.

ALSO:

➡ AI’s copyright problem CAN be solved: tech evangelist Tim O’Reilly.

➡ Photoedit platform Picsart partners with Getty Images for AI imagery.

🤖 TECH WATCH

ILYA SUTSKEVER, the OpenAI co-founder who quit the company last month, announced on X he was starting a new venture called Safe Superintelligence (SSI). “We will pursue safe superintelligence in a straight shot, with one focus, one goal, and one product,” proclaimed Sutskever, who co-led OpenAI’s now-disbanded superalignment team with Jan Leike. Leike quit for rival Anthropic shortly after Sutskever’s departure, saying OpenAI’s safety processes had “taken a backseat to shiny products”. Joining Sutskever at SSI are Daniel Gross, who oversaw Apple’s AI push, and Daniel Levy, a former technical staff member at OpenAI. 🔗 Sutskever on X; SSI statement

🌟 KEY TAKEAWAY: In its launch statement, SSI takes a shot at the governance and profit motives of the leading AI companies, saying its approach would mean “no distraction by management overhead or product cycles” while progress towards superintelligence would be “insulated from short-term commercial pressures”. “This way, we can scale in peace,” adds the statement, an apparent dig at the recent noise and chaos at OpenAI.

ALSO:

➡ OpenAI might alter its governance structure, become a for-profit: report.

➡ Anthropic launches Claude 3.5 Sonnet, ‘raising the bar for intelligence’.

➡ Hacker tells Financial Times he can break the world’s largest AI models.

➡ Snap to blend generative AI with augmented reality to create 3D effects.

➡ Microsoft recalls Recall from Copilot+ PCs after privacy concerns raised.

➡ Ads in Google’s AI Overviews ‘to generate $16.9 billion by 2027’: forecast.

➡ Ex-Baidu CPO launches Genspark, yet another AI-powered search engine.

📣 MARKETING

AI HAS BEEN front and centre at the Cannes Lions festival of creativity with discussions ranging from the potential loss of jobs (reminder: OpenAI CEO Sam Altman believes AI will eventually handle “95% of what marketers use agencies, strategists, and creative professionals for”) to it powering new artworks and advertising formats. Google, Meta, Snap and TikTok showcased their latest adtech and AI tools while OpenAI CTO Mira Murati shared a stage with Accenture Song CEO David Droga to consider the challenges AI poses for human creativity. 🔗 Fortune; AdWeek; Digiday

🌟 KEY TAKEAWAY: Among the comments that caught our eye was a question posed by Brian Yamada, chief innovation officer at creative agency VML. Yamada asked what percentage of a creative work would need to be AI-created for it to require a ‘created with AI’ label. “What if it’s only the background or a portion of the asset? Or just the voice and lip sync but not a fully generated avatar?” Yamada said the ad industry and regulatory bodies were likely to struggle with this question. He’s right.

ALSO:

➡ AI integration should support comms staff, not displace them: WEF.

➡ How can gen AI boost marketing? This academic has three big ideas.

➡ Most US ad agencies use gen AI but adoption barriers linger: Forrester.

➡ Verizon says generative AI will stop 100,000 customers leaving in 2024.

IN BRIEF

📸 Snapper wins award for generative AI images with human-made photo.

💥 Artist claims glitch in Superman shield shows generative AI was used.

🎶 YouTube explains principles for partnering with music industry on AI.

🎸 Meta’s new JASCO model turns chords and beats into full musical tracks.

📽️ Generative movie Eno will have “52 quintillion variations” thanks to AI.

🚨 Pixar-style animation created with Luma’s Dream Machine goes viral.

🤖 Google DeepMind is developing ‘video-to-audio’ AI music soundtracks.

✨ How might gen AI be used for VFX, and what impact could it have?

💬 QUOTES OF NOTE

“We cannot trust tech companies that swear their innovations are so important that they do not need to pay for one of the main ingredients — other people’s creative works. The “better future” we are being sold by OpenAI and others is, in fact, a dystopia. It’s time for creative professionals to stand together, demand what we are owed and determine our own futures.” — Mary Rasenberger, CEO of the Authors Guild, writing in the LA Times

“In just two years, the public has gone from being blown away by AI-generated images to sharing viral social media posts about how to opt out of AI scraping — a concept that was alien to most laypeople until very recently.” — Melissa Heikkilä, senior reporter at MIT Technology Review

“AI can produce jokes, but they aren’t yet very funny. Which I think is evidence that there is still a missing human connection — some level of shared understanding in AI that is not yet quite there.” — Rory Sutherland, vice-chair of Ogilvy UK, talking to the FT ahead of the Cannes Lions festival

“In public statements, gen AI companies are justifying training on people’s work without permission by pointing out that other gen AI companies do the same thing. While true, this is a laughable defence. You don’t avoid speeding fines just because the car ahead of you was speeding.” — Ed Newton-Rex, CEO of Fairly Trained, the non-profit certifying fair training data use, on X

🤔 AND FINALLY …

Amid the hype and frenzied excitement over generative AI, a recurring question keeps being asked: what’s the killer application? A Dutch YouTuber has gone some way to answering that: making Lego artwork, one pixel at a time. The Pixelbot 3000 prints Lego pixel art using OpenAI’s DALL-E 3 to generate a source image which is then distilled into a 32x32 grid. A Python program picks the closest colour for each pixel based on Lego’s limited palette. And voilà! Beautiful Lego mosaics. The YouTuber, known only as Sten or by his online moniker Creative Mindstorms, documents how he brought his brilliant innovation to life in a captivating video. There should be awards for this sort of thing!
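
For the curious, the colour-matching step can be sketched in a few lines of Python. This is a minimal illustration, not Sten's actual code: the palette names and RGB values below are made up for the example rather than taken from Lego, and squared RGB distance is our own assumption about how "closest" might be measured.

```python
from typing import Dict, List, Tuple

RGB = Tuple[int, int, int]

# Hypothetical palette: illustrative names and values, not official Lego colours.
PALETTE: Dict[str, RGB] = {
    "black": (0, 0, 0),
    "white": (255, 255, 255),
    "red": (200, 30, 30),
    "blue": (30, 60, 200),
    "yellow": (250, 200, 10),
    "green": (40, 150, 60),
}


def closest_colour(pixel: RGB, palette: Dict[str, RGB] = PALETTE) -> str:
    """Return the palette name with the smallest squared RGB distance to pixel."""
    return min(
        palette,
        key=lambda name: sum((p - q) ** 2 for p, q in zip(pixel, palette[name])),
    )


def quantise(grid: List[List[RGB]], palette: Dict[str, RGB] = PALETTE) -> List[List[str]]:
    """Map every pixel in a grid (a list of rows) to its nearest palette colour."""
    return [[closest_colour(px, palette) for px in row] for row in grid]


if __name__ == "__main__":
    # A stand-in 32x32 "image": off-white everywhere with one dark pixel.
    image = [[(250, 250, 250) for _ in range(32)] for _ in range(32)]
    image[0][0] = (10, 10, 10)
    mosaic = quantise(image)
    print(mosaic[0][:3])
```

Each name in the resulting grid would correspond to one brick for the printer to place. A real build would presumably also need to shrink the generated image down to 32x32 first, which this sketch leaves out.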